The New York Times ushered in the New Year with a lawsuit against OpenAI and Microsoft. The paper covered the suit, fittingly, as a major business story. OpenAI and its Microsoft patron had, according to the filing, stolen “millions of The Times’ copyrighted news articles, in-depth investigations, opinion pieces, reviews, how-to guides,” and more—all to train OpenAI’s large language models (LLMs). The Times sued to stop the tech companies’ “free-ride” on the newspaper’s “uniquely valuable” journalism.

OpenAI and Microsoft have, of course, cited fair use to justify their permissionless borrowing. Across 70 bitter pages, the Times’ lawyers drove a bulldozer through all four factors that U.S. judges weigh for fair use. The brief also points to reputational harm—from made-up responses that ChatGPT or Bing Chat attribute to the Times: “In plain English, it’s misinformation.”

There’s no question that attorneys for Elsevier and the other scholarly-publishing giants are reading the Times filing carefully. They’ll notice a leitmotif: The newspaper’s expensively reported stories produce trusted knowledge, in otherwise short supply. In a “damaged information ecosystem […] awash in unreliable content,” the Times’ journalism is an “exceptionally valuable body of data” for AI training, states the filing. Other news organizations have the same view; some have signed licensing deals, while others are negotiating with OpenAI and its peers. No more free rides.

The big scholarly publishers are very likely to agree. And they’re sitting on the other corpus of vetted knowledge, science and scholarship. So licensing talks are almost certainly underway, with threats and lawsuits doubtlessly prepped. At the same time, the commercial publishers are building their own AI products. In the year since ChatGPT’s splashy entrance, at least three of the big five scholarly publishers, plus Clarivate, have announced tools and features powered by LLMs. They are joined by dozens of VC-backed startups—acquisition targets, one and all—promising an AI boost across the scholarly workflow, from literature search to abstract writing to manuscript editing.

Thus the two main sources of trustworthy knowledge, science and journalism, are poised to extract protection money—to otherwise exploit their vast pools of vetted text as “training data.” But there’s a key difference between the news and science: Journalists’ salaries, and the cost of reporting, are covered by the companies. Not so for scholarly publishing: Academics, of course, write and review for free, and much of our research is funded by taxpayers. The Times suit is marinated in complaints about the costly business of journalism. The likes of Taylor & Francis and Springer Nature won’t have that argument to make. It’s hard to call out free-riding when it’s your own business model.

Surveillance Publishing, LLM Edition

The AI hype-cycle froth has come for scholarly publishing. The industry’s feverish—if mostly aspirational—embrace of AI should be read as the latest installment of an ongoing campaign.[1] Led by Elsevier, commercial publishers have, for about a decade, layered another business on top of their legacy publishing operations. That business is to mine and process scholars’ works and behavior into prediction products, sold back to universities and research agencies. Elsevier, for example, peddles dashboard software, Pure, to university assessment offices—one that assigns each of the school’s researchers a Fingerprint® of weighted keywords. The underlying data comes from Elsevier’s Scopus, the firm’s proprietary database of abstracts and citations. Thus the scholar is the product: Her articles and references feed Scopus and Pure, which are then sold back to her university employer. That same university, of course, already shells out usurious subscription and APC dollars to Elsevier—which, in a painful irony, have financed the very acquisition binge that transformed the firm into a full-stack publisher.

Elsevier and the other big publishers are, to borrow Sarah Lamdan’s phrase, data cartels. I’ve called this drive to extract profit from researchers’ behavior surveillance publishing—by analogy to Shoshana Zuboff’s notion of surveillance capitalism, in which firms like Google and Meta package user data to sell to advertisers. The core business strategy is the same for Silicon Valley and Elsevier: extract data from behavior to feed predictive models that, in turn, get refined and sold to customers. In one case it’s Facebook posts and in the other abstracts and citations, but either way the point is to mint money from the by-products of (consumer or scholarly) behavior. One big difference between the big tech firms and the publishers is that Google et al. entice users with free services like Gmail: If you’re not paying for it, the adage goes, then you’re the product. In the Elsevier case we’re the product and we’re paying (a lot) for it.

Elsevier and some of the other big publishers already harness their troves of scholarly data to, for example, assign subject keywords to scholars and works. They have, indeed, been using so-called AI for years now, including variations on the machine-learning (ML) techniques ascendant in the last 15 years or so. What’s different about the publishers’ imminent licensing windfall and wave of announced tools is, in a word, ChatGPT. It’s true that successive versions of enormous, “large language” models from OpenAI, Google, and others have been kicking around in commercial and academic circles for years. But the November 2022 public release of ChatGPT changed the game. Among other things, and almost overnight, the value of content took on a different coloration. Each of the giant “foundation” models, including OpenAI’s GPT series, is fed on prodigious helpings of text. The appetite for such training data isn’t sated, even as the legality of the ongoing ingestion is an open and litigated question.

The big publishers think they’re sitting on a gold mine. It’s not just their paywalled, full-text scholarship, but also the reams of other data they hoover up from academics across their platforms and products. In theory at least, their proprietary content is—unlike the clown show of the open web—vetted and linked. On those grounds, observers have declared that publishers may be the “biggest winners” in the generative AI revolution. Maybe. But either way, expect Springer Nature, Taylor & Francis, Elsevier, Wiley, and SAGE to test the theory.

Hallucinating Parrots

Truly large language models, like those driving ChatGPT and Bard, are notorious fabulists. They routinely, and confidently, return what the industry euphemism terms “hallucinations.” Some observers expect the problem to keep getting worse, as LLM-generated material floods the internet. The big models, on this fear, will feed on their own falsehood-ridden prose in subsequent training rounds—a kind of large-language cannibalism that, over time, could crowd out whatever share of the pre-LLM web was more or less truthful.

One solution to the problem, with gathering VC and hype-cycle momentum, is a turn to so-called “small” language models. The idea is to apply the same pattern-recognition techniques, but on curated, domain-specific data sets. One advantage of the smaller models, according to proponents, is their ability to restrict training data to the known and verifiable. The premise is that, with less garbage in, there will be less garbage out.

So it’s no surprise that the published scientific record has emerged, in the industry chatter, as an especially promising hallucination-slayer. Here’s a body of vetted knowledge, the thinking goes, cordoned off from the internet’s Babelist free-for-all. What makes the research corpus different is, well, peer review and editorial gate-keeping, together with citation conventions and scholars’ putative commitment to a culture of self-correcting criticism. Thus the published record is—among bodies of minable text—uniquely trustworthy. Or that’s what small-language evangelists are claiming.

Enter Elsevier and its oligopolistic peers. They guard (with paywalled vigilance) a large share of published scholarship, much of which is unscrapable. A growing proportion of their total output is, it’s true, open access, but a large share of that material carries a non-commercial license. Standard OA agreements tend to grant publishers blanket rights, so they have a claim—albeit one contested on fair-use grounds by OpenAI and the like—to exclusive exploitation. Even the balance of OA works that permit commercial re-use is corralled with the rest, on proprietary platforms like Elsevier’s ScienceDirect. Those platforms also track researcher behavior, like downloads and citations, that can be used to tune their models’ outputs. Such models could, in theory, be fed by proprietary bibliographic platforms, such as Clarivate’s Web of Science, Elsevier’s Scopus, and Digital Science’s Dimensions (owned by Springer Nature’s parent company).

‘The World’s Largest Collection’

One area where a number of big publishers are already jumping in is search-based summary. Elsevier is piloting Scopus AI, with an early 2024 launch expected. Researchers type in natural-language questions, and they get a summary spit out, with some suggested follow-up questions and references—those open a ScienceDirect view in the sidebar. Scopus AI results also include a “Concept Map”—an expandable topic-based tree, presumably powered by the firm’s Fingerprint keywords.

The tool is combing its Scopus titles and abstracts—from 2018 on—and then feeding the top 10 or so results into an OpenAI GPT model for summarizing. Elsevier isn’t shy about its data-trove advantage: Scopus AI is “built on the world’s largest collection of trusted peer-reviewed academic literature,” proclaims a splashy promotional video.
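The retrieve-then-summarize pattern described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not Elsevier’s implementation: the `Record` type, the crude word-overlap scoring, the sample corpus, and the prompt wording are all hypothetical, and no language model is actually called. The sketch stops at the point where the assembled prompt would be handed to a summarizing model.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    """A hypothetical bibliographic record: title, abstract, year."""
    title: str
    abstract: str
    year: int


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, records: list[Record],
             min_year: int = 2018, top_k: int = 10) -> list[Record]:
    """Keep records from min_year on, rank by word overlap, return top_k."""
    query_words = tokenize(query)
    scored = []
    for record in records:
        if record.year < min_year:
            continue  # assumption: older records are simply excluded
        overlap = len(query_words & tokenize(record.title + " " + record.abstract))
        if overlap > 0:
            scored.append((overlap, record))
    # Stable sort: ties keep their original corpus order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [record for _, record in scored[:top_k]]


def build_prompt(query: str, hits: list[Record]) -> str:
    """Assemble the text that would be sent to a summarizing model."""
    sources = "\n".join(
        f"[{i}] {r.title} ({r.year}): {r.abstract}"
        for i, r in enumerate(hits, start=1)
    )
    return (
        "Answer the question using only the numbered abstracts.\n"
        f"Question: {query}\n{sources}"
    )


# Made-up sample records, for illustration only.
CORPUS = [
    Record("Peer review bias", "Gender bias in peer review outcomes.", 2016),
    Record("Citation gaps", "Gender gaps in citation counts across fields.", 2020),
    Record("Soil microbes", "Nitrogen cycling in forest soils.", 2021),
    Record("Review bias revisited", "Peer review and gender bias, a replication.", 2022),
]

if __name__ == "__main__":
    question = "gender bias peer review"
    print(build_prompt(question, retrieve(question, CORPUS)))
```

Even in this toy version, two design choices do quiet filtering work: the year cutoff drops the 2016 record no matter how relevant it is, and the `top_k` cap decides which abstracts the summarizer ever sees.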

Springer Nature and Clarivate are also in on the search-summary game. Dimensions, the Scopus competitor from Springer Nature’s corporate sibling, has a Dimensions AI Assistant in trials. Like Scopus AI, the Dimensions tool is retrieving a small number of abstracts based on conversational search, turning to models from OpenAI and Google for the summaries.

Meanwhile, Clarivate—which owns Web of Science and ProQuest—has struck a deal with AI21 Labs, an Israeli LLM startup (tagline: “When Machines Become Thought Partners”). Using Clarivate’s “trusted content as the foundation,” AI21 promises to use its models to generate “high-quality, contextual-based answers and services,” with what it frankly calls “Clarivate’s troves of content and data.”

The big firms will be competing with a stable of VC-backed startups, including Ought (“Scale up good reasoning”), iris.ai (“The Researcher Workspace”), SciSummary (“Use AI to summarize scientific articles in seconds”), Petal (“Chat with your documents”), Jenni (“Supercharge Your Next Research Paper”), scholarcy (“The AI-powered article summarizer”), Imagetwin (“Increase the Quality in Science”), keenious (“Find research relevant to any document!”), and Consensus (“AI Search Engine for Research”).

An open question is whether the startups can compete with the big publishers; many are using the open access database Semantic Scholar, which excludes the full text of paywalled articles. They’ve won plenty of venture-capital backing, but—if the wider AI industry is any guide—the startups will face an uphill climb to stay independent. Commercial AI, after all, is dominated by a handful of giant U.S. and Chinese corporations, nearly all big tech incumbents. The industry has ferocious economies of scale, largely because model-building takes enormous financial and human resources.

The big publishers may very well find themselves in a similar pole position. The firms’ stores of proprietary full-text papers and other privately held data are a built-in advantage. Their astronomical margins on legacy subscription-and-APC publishing businesses mean that they have the capital at hand to invest and acquire. Elsevier’s decade-long acquisition binge was, in that same way, financed by its lucrative earnings. There’s every reason to expect that the company will fund its costly LLM investments from the same surplus; Elsevier’s peers are likely to follow suit. Thus universities and taxpayers are serving, in effect, as a capital fund for AI products that, in turn, will be sold back to us. The independent startups may well be acquired along the way. The giant publishers themselves may be acquisition targets for the even-larger Silicon Valley firms hungry for training data—as Avi Staiman recently observed in The Scholarly Kitchen.

The acquisition binge has already begun. In October Springer Nature acquired the Science division of Slimmer AI, a Dutch “AI venture studio” that the publisher has worked with since 2015 on peer review and plagiarism-detection tools. Digital Science, meanwhile, just bought Writefull, which makes an academic writing assistant (to join corporate sibling Springer Nature’s recently announced Curie). Digital Science pitched the acquisition as a small-language model play: “While the broader focus is currently on LLMs,” said a company executive in the press release, “Writefull’s small, specialized models offer more flexibility, at lower cost, with auditable metrics.” Research Solutions, a Nevada company that sells access to the big commercial publishers’ paywalled content to corporations, recently bought scite, a startup whose novel offering—citation contexts—has been repackaged as “ChatGPT for Science.”

Fair Use?

As the Times lawsuit suggests, there’s a big legal question mark hovering over the big publishers’ AI prospects. The key issue, winding its way through the courts, is fair use: Can the likes of OpenAI scrape up copyrighted content into their models, without permission or compensation? The Silicon Valley tech companies think so; they’re fresh converts to fair-use maximalism, as revealed by their public comments filed with the US Copyright Office. The companies’ “overall message,” reported The Verge in a round-up, is that they “don’t think they should have to pay to train AI models on copyrighted work.” Artists and other content creators have begged to differ, filing a handful of high-profile lawsuits.

The publishers haven’t filed their own suits yet, but they’re certainly watching the cases carefully. Wiley, for one, told Nature that it was “closely monitoring industry reports and litigation claiming that generative AI models are harvesting protected material for training purposes while disregarding any existing restrictions on that information.” The firm has called for audits and regulatory oversight of AI models, to address the “potential for unauthorised use of restricted content as an input for model training.” Elsevier, for its part, has banned the use of “our content and data” for training; its sister company LexisNexis, likewise, recently emailed customers to “remind” them that feeding content to “large language models and generative AI” is forbidden. CCC (née Copyright Clearance Center), in its own comments to the US Copyright Office, took a predictably muscular stance on the question:

There is certainly enough copyrightable material available under license to build reliable, workable, and trustworthy AI. Just because a developer wants to use “everything” does not mean it needs to do so, is entitled to do so, or has the right to do so. Nor should governments and courts twist or modify the law to accommodate them.

The for-profit CCC is the publishing industry’s main licensing and permission enforcer. Big tech and the commercial publishing giants are already maneuvering for position. As Joseph Esposito, a keen observer of scholarly publishing, put the point: “scientific publishers in particular, may have a special, remunerative role to play here.”

One near-term consequence may be a shift in the big publishers’ approach to open access. The companies are already updating their licenses and terms to forbid commercial AI training—for anyone but them, of course. The companies could also pull back from OA altogether, to keep a larger share of exclusive content to mine. Esposito made the argument explicit in a recent Scholarly Kitchen post: “The unfortunate fact of the matter is that the OA movement and the people and organizations that support it have been co-opted by the tech world as it builds content-trained AI.” Publishers need “more copyright protection, not less,” he added. Esposito’s consulting firm, in its latest newsletter, called the liberal Creative Commons BY license a “mechanism to transfer value from scientific and scholarly publishers to the world’s wealthiest tech companies.” Perhaps, though I would preface the point: Commercial scholarly publishing is a mechanism to transfer value from scholars, taxpayers, and universities to the world’s most profitable companies.

The Matthew Effect in AI

There are a hundred and one reasons to worry about Elsevier mining our scholarship to maximize its profits. I want to linger on what is, arguably, the most important: the potential effects on knowledge itself. At the core of these tools—including a predictable avalanche of as-yet-unannounced products—is a series of verbs: to surface, to rank, to summarize, and to recommend. The object of each verb is us—our scholarship and our behavior. What’s at stake is the kind of knowledge that the models surface, and whose knowledge.

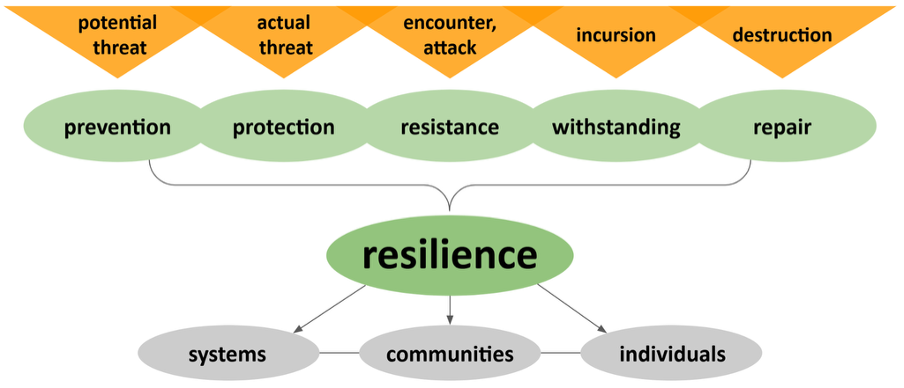

AI models are poised to serve as knowledge arbitrators, by picking winners and losers according to what they make visible. There are two big and interlocking problems with this role: The models are trained on the past, and their filtering logic is inscrutable. As a result, they may smuggle in the many biases that mark the history of scholarship, around gender, geography, and other lines of difference. In this context it’s useful to revive an old concept in the sociology of science. According to the Matthew Effect—named by Robert Merton decades ago—prominent and well-cited scholars tend to receive still more prominence and citations. The flip side is that less-cited scholars tend to slip into further obscurity over time. (“For to every one who has will more be given, and he will have abundance; but from him who has not, even what he has will be taken away”— Matthew 25:29.) These dynamics of cumulative advantage have, in practice, served to amplify the knowledge system’s patterned inequalities—for example, in the case of gender and twentieth-century scholarship, aptly labeled the Matilda Effect by Margaret Rossiter.
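The logic of cumulative advantage can be made concrete with a small simulation. The sketch below is only an illustration, built on a deliberately simple assumption: every paper starts out equal, and each new citation goes to a paper with probability proportional to the citations it already has, plus one. The function names and parameter values are hypothetical, and the model is a stand-in for the idea rather than Merton’s own formalization or an estimate drawn from real citation data.

```python
import random


def simulate_citations(n_papers: int = 100, n_citations: int = 5000,
                       seed: int = 0) -> list[int]:
    """Hand out citations one at a time, favoring already-cited papers.

    Assumption: the chance that a paper receives the next citation is
    proportional to (its current citation count + 1).
    """
    rng = random.Random(seed)
    counts = [0] * n_papers
    for _ in range(n_citations):
        weights = [count + 1 for count in counts]
        winner = rng.choices(range(n_papers), weights=weights, k=1)[0]
        counts[winner] += 1
    return counts


def top_share(counts: list[int], fraction: float = 0.1) -> float:
    """Share of all citations held by the most-cited `fraction` of papers."""
    ranked = sorted(counts, reverse=True)
    k = max(1, int(len(ranked) * fraction))
    return sum(ranked[:k]) / sum(ranked)


if __name__ == "__main__":
    counts = simulate_citations()
    print(f"Top 10% of papers hold {top_share(counts):.0%} of citations")
```

The papers in this toy world are identical in quality; any concentration at the top comes from early luck compounding. A ranking tool trained on such counts would treat that accumulated luck as a signal of merit.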

The deployment of AI models in science, especially proprietary ones, may produce a Matthew Effect on the scale of Scopus, and with no paper trail. The problem is analogous to the well-documented bias-smuggling with existing generative models; image tools trained on, for example, mostly white and male photos reproduce the skew in their prompt-generated outputs. With our bias-laden scholarship as training data, the academic models may spit out results that, in effect, double down on inequality. What’s worse is that we won’t really know, due to the models’ black-boxed character. Thus the tools may act as laundering machines—context-erasing abstractions that disguise their probabilistic “reasoning.” Existing biases, like male academics’ propensity for self-citation, may win a fresh coat of algorithmic legitimacy. Or consider center-periphery dynamics along North-South and native-English-speaking lines: Gaps traceable to geopolitical history, including the legacy of European colonialism, may be buried still deeper. The models, in short, could serve as privilege multipliers.

The AI models aren’t going away, but we should demand that—to whatever extent possible—the tools and models are subject to scrutiny and study. This means ruling out proprietary products, unless they can be pried open by law or regulation. We should, meanwhile, bring the models in-house, within the academic fold, using mission-aligned collections like the Open University’s CORE and the Allen Institute’s Semantic Scholar. Academy-led efforts to build nonprofit models and tools should be transparent, explainable, and auditable.

Stop Tracking Scholarship

These are early days. The legal uncertainty, the vaporware, the breathless annual-report prose: All of it points to aspiration and C-suite prospecting. We’re not yet living in a world of publisher small-language models, trained on our work and behavior.

Still, I’m convinced that the big five publishers, plus Clarivate, will make every effort to pad their margins with fresh AI revenue. My guess is that they’ll develop and acquire their way to a product portfolio up, down, and around the research-lifecycle, on Elsevier’s existing full-stack model. After all—and depending on what we mean by AI—the commercial publishers have been rolling out AI products for years. Every signal suggests they’ll pick up the pace, with hype-driven pursuit of GPT-style language models in particular. They’ll sell their own products back to us, and—I predict—license our papers out to the big foundation models, court-willing.

So it’s an urgent task to push back now, and not wait until after the models are trained and deployed. What’s needed is a full-fledged campaign, leveraging activism and legislative pressure, to challenge the commercial publishers’ extractive agenda. One crucial framing step is to treat the impending AI avalanche as continuous with—as an extension of—the publishers’ in-progress mutation into surveillance-capitalist data businesses. The surveillance publisher era was symbolically kicked off in 2015, when Reed-Elsevier adopted its “shorter, more modern name” RELX Group to mark its “transformation” from publisher to “technology, content and analytics driven business.” They’ve made good on the promise, skimming scholars’ behavioral cream with product-by-product avidity. Clarivate and Elsevier’s peers have followed their lead.

Thus the turn to AI is more of the same, only more so. The publishers’ cocktail of probability, prediction, and profit is predicated on the same process: extract our scholarship and behavior, then sell it back to us in congealed form. The stakes are higher given that some of the publishers are embedded in data-analytics conglomerates—RELX (Elsevier) and Informa (Taylor & Francis), joined by publisher-adjacent firms like Clarivate and Thomson Reuters. Are the companies cross-pollinating their academic and “risk solutions” businesses? RELX’s LexisNexis sold face-tracking and other surveillance tools to the US Customs and Border Protection last year, as The Intercept recently reported. As SPARC (the library alliance) put it in its November report on Elsevier’s ScienceDirect platform: “There is little to nothing to stop vendors who collect and track [library] patron data from feeding that data—either in its raw form or in aggregate—into their data brokering business.”

So far the publishers’ data-hoovering hasn’t galvanized scholars to protest. The main reason is that most academics are blithely unaware of the tracking—no surprise, given scholars’ too-busy-to-care ignorance of the publishing system itself. The library community is far more attuned to the unconsented pillage, though librarians—aside from SPARC—haven’t organized on the issue. There have been scattered notes of dissent, including a Stop Tracking Science petition, and an outcry from Dutch scholars over a 2020 data-and-publish agreement with Elsevier, largely because the company had baked its prediction products into the deal. In 2022 the German national research foundation, Deutsche Forschungsgemeinschaft (DFG), released its own report-cum-warning—“industrialization of knowledge through tracking,” in the report’s words. Sharp critiques from, among others, Bjorn Brembs, Leslie Chan, Renke Siems, Lai Ma, and Sarah Lamdan have appeared at regular intervals.

None of this has translated into much, not even awareness among the larger academic public. A coordinated campaign of advocacy and consciousness-raising should be paired with high-quality, in-depth studies of publisher data harvesting—on the example of SPARC’s recent ScienceDirect report. Any effort like this should be built on the premise that another scholarly-publishing world is possible. Our prevailing joint-custody arrangement—for-profit publishers and non-profit universities—is a recent and reversible development. There are lots of good reasons to restore custody to the academy. The latest is to stop our work from fueling the publishers’ AI profits.

Acknowledgments

A peer-reviewed version of this blog post has been published in KULA.

[1] The term itself is misleading, though now unavoidable. By AI (artificial intelligence), I am mostly referring to the bundle of techniques now routinely grouped under the “machine learning” label. There is an irony in this linguistic capture. For decades after its coinage in the mid-1950s, “artificial intelligence” was used to designate a rival approach, grounded in rules and symbols. What most everyone now calls AI was, until about 30 years ago, excluded from the club. The story of how neural networks and other ML techniques won admission has not yet found its chronicler. What’s clear is that a steep funding drop-off in the 1980s (the so-called “AI winter”) made the once-excluded machine-learning rival—its predictive successes on display over subsequent decades—a very attractive aid to winning back the grant money. This essay is based on an invited talk delivered for Colgate University’s Horizons colloquium series in October 2023.

Copyright © 2024 Jeff Pooley. Distributed under the terms of the Creative Commons Attribution 4.0 License.